Do You Just Trust Elevators?

The technology works. Your people know it. The problem is they don't trust it yet, and closing that gap has always been designed, not discovered.

I just got back from HR Tech Asia in Singapore. I stayed at the JW Marriott South Beach, which is a remarkable property. My room was on the 17th floor, and at one point it felt like I was just doing elevator rides all day long. Somewhere around day two, I noticed a brand I was familiar with: Otis. A technician in full Otis gear, servicing the elevator I’d been stepping into without a single thought.

I pressed 17 and spent the whole ride thinking. Do you just trust elevators?

Think about that for a second. You walk up to a metal box suspended by cables, seventeen floors above the ground, operated by a system you’ve never inspected and couldn’t explain if you tried. The doors open and you step in. You don’t hesitate and you don’t read the certificate on the wall. You press the button and you wait.

What built that trust? When did it happen? Who designed it?

I spent the flight home thinking about those three questions. And then I did something I didn’t expect to do on a long haul back from Singapore: I went deep on elevator history. I’ll be honest, I’d never actually looked into how elevators earned our trust. Turns out it’s one of the better-documented stories in the history of humans and technology, and it maps almost perfectly to where we are right now with AI.

Because at HR Tech Asia I’d just spent three days hearing the exact opposite of trust on a loop. Leaders whose people won’t adopt, employees who don’t have confidence the technology works for them, and organizations that have bought every tool available and still can’t get anyone to actually use them. These were different companies in different countries and in different industries, yet it was the same conversation everywhere I went.

The technology works. Nobody trusts it.

I'd been hearing that for years. But watching an Otis technician work in that Singapore hotel lobby, I finally had the frame for why and, more importantly, for what to do about it. And as you know, I love a good analogy.

The Gap Has a Name

We talk endlessly about technology readiness. Is the model accurate enough? Is the infrastructure stable? Can it handle the load? These are real questions. Just not the ones that are actually slowing us down.

What slows us down is the gap between capability and trust, and that gap almost never lives in the machine.

Elevators existed before Otis. Freight hoists, steam-powered platforms, pulleys and ropes moving goods between floors for years. The problem wasn’t engineering. The problem was that the rope broke sometimes, and when it did, people died. The rational human response to a suspended platform was: no, absolutely not!

Otis’s safety brake changed the physical reality. A changed physical reality doesn’t automatically earn trust. That’s not how humans work. We don’t update on specs, we don’t update on whitepapers, we update on proof and on witnessing. On trust built slowly, through repeated evidence, in public.

Sound familiar? See, I told you I learned about elevator history.

What Otis Actually Did

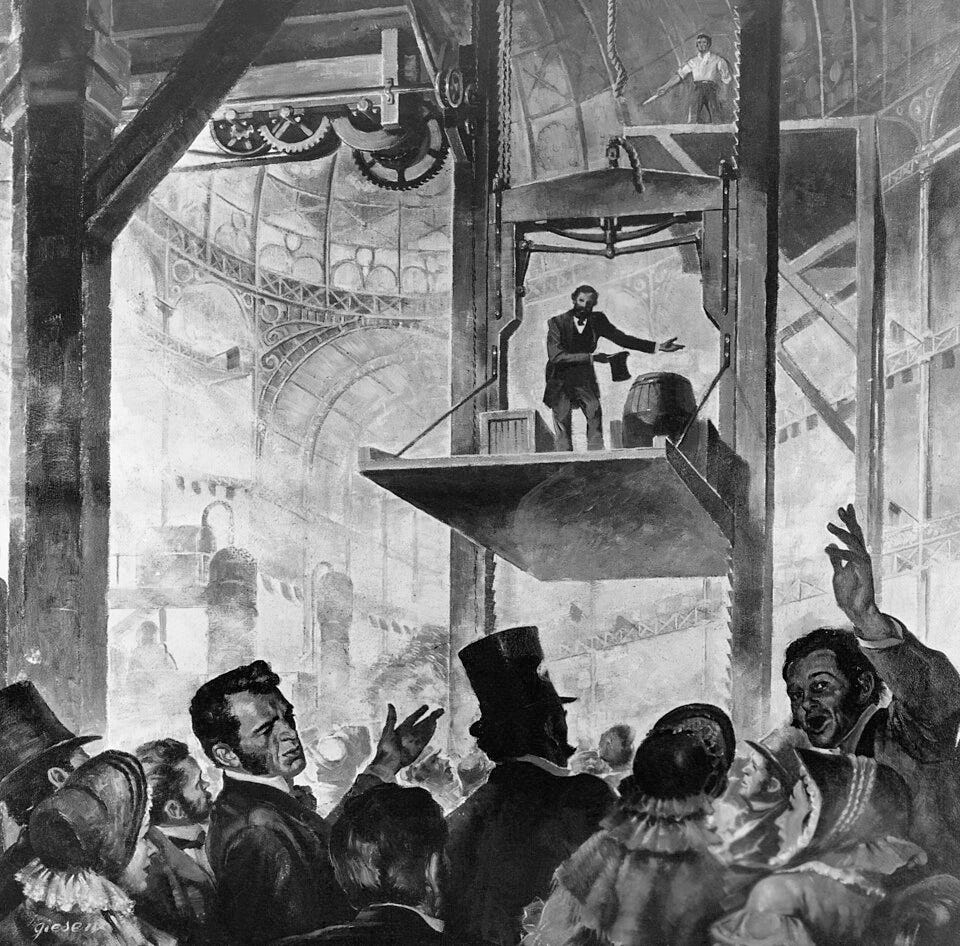

Otis didn’t just show that his elevator worked. He engineered a moment of maximum visible failure and then survived it. At the Crystal Palace exhibition in New York in 1854, he rode the platform himself, had someone cut the rope, and stood there while the crowd waited for him to fall.

Some accounts say it was P.T. Barnum who paid Otis $100 to stage the whole thing. Whether that’s true or not almost doesn’t matter. The point is someone understood that the technology needed a showman.

He manufactured danger in order to manufacture trust.

That’s not marketing, that’s changefulness. It’s a completely different understanding of what closing the gap actually requires. You don’t close it with a data sheet. You close it by letting people watch the worst-case scenario play out safely. By replacing “this works, trust me” with “watch it not kill me.”

And even then, it took years. The Haughwout Building in New York installed the first commercial passenger elevator in 1857, three years after the Crystal Palace demo. And those early elevators had human operators. Not because the technology required them, because the humans did.

The attendant wasn’t functional. The attendant was a trust signal. Someone is in control. Someone is responsible. Someone is here if something goes wrong.

We did the same thing with automobiles, with airplanes, with every technology that ever asked humans to hand over control to a system they couldn’t fully see. To a degree, we even did it with employee and manager self-service.

What the Industry Built Next

Otis solved the engineering problem in 1854. The industry then spent the next hundred years solving the human problem wrapped around it.

Three things stand out:

The first was the operator. Every early passenger elevator had one: a uniformed human whose job was to run the car, call the floors, and stand between the passenger and the machine. Not because the technology required it. The safety brake worked fine without them, but the operator was there because passengers needed a person to hold responsible. It was someone to look at and someone to hold accountable if something went wrong. Trust doesn’t attach to systems. It attaches to people.

The second was music. Starting in the 1920s, elevator cabs began piping in soft background sound — not for entertainment, but to mask the creaks and groans of the machinery at work. Silence amplified every shudder. Every mechanical noise reminded passengers they were suspended in a box by cables. Music gave their nervous system something else to land on. It wasn’t aesthetic, it was behavioral design.

The third was mirrors. Walk into almost any elevator today and you’ll find one. Not installed for vanity. Early elevator cabs were slow, dimly lit, and claustrophobic. Mirrors gave passengers something to focus on besides the walls. They expanded the perceived space and redirected attention away from the machinery and back toward the human.

None of these solved a technical problem. All of them solved a trust problem.

The elevator worked. The industry’s job for decades was making people willing to use it.

That’s the part most organizations skip entirely.

The Playbook

Four things moved the needle. Every single one maps directly to where most organizations are failing with AI right now.

The first is the public moment. Otis didn’t send an email about his safety brake. He rode the elevator himself, had the rope cut, and let people watch. Your AI rollout needs the same energy. Not a controlled demo with a friendly audience but a real use case, with real stakes, and real people watching it work. Leaders who use the tools visibly in meetings, in decisions, in front of their teams build more trust in a week than a training program builds in a quarter. You have to cut the rope in public.

The second is scaffolding. Inspection regimes and safety codes didn’t make elevators more capable. They made them trustworthy at scale. Your AI strategy needs the same infrastructure: clear policies, visible governance, guardrails people can see and understand. Not because the technology requires it but because your people do. They need to know someone is checking the work and that someone is accountable. The certificate on the wall matters even when nobody reads it.

The third is the human in the cab. Elevator operators didn’t run the technology. They absorbed the anxiety while the technology earned its credibility. You need the same in your organization right now: managers who can sit with their teams inside the uncertainty, people who’ve gone first and can say, “I’ve used this, here’s what I learned, here’s what it won’t do.” Not a helpdesk or a support line but a human who’s been on the ride.

The fourth is the short floor. Nobody’s first elevator ride was to the 40th floor. Short floors first: low stakes, high visibility, fast feedback. Pick the use case where failure is recoverable and success is visible. Let people experience the technology in small doses before you ask them to trust it fully. Most organizations do the opposite. They announce the transformation first and build the confidence second.

The elevator industry figured all four of these out over a century. Most organizations are trying to get them all accomplished in a fiscal quarter.

A Note on the Operator

The elevator operator didn’t last forever. By the mid-twentieth century, automation handled what they once did: floor selection, door timing, the smooth stop. Operators were gone from most buildings by the 1970s.

I think about that a lot.

The temptation is to read that as tragedy or proof that the human intermediary was never real, just a crutch. That misses what actually happened. Operators ran elevators for nearly a hundred years. They made the technology accessible to generations of people who would not have trusted it otherwise. They absorbed the anxiety so the technology could mature. That’s not a small thing. That’s the entire adoption curve.

The transitional human matters. Not as a permanent fixture but as a bridge.

The leaders who understand that build better transitions, because they’re honest about what they’re actually asking people to do. You’re not asking your team to trust a system. You’re asking them to trust you, while the system earns its own credibility.

That’s a different ask and it deserves a different answer.

The Real Fear

Most organizations want the trust without the work.

They want people to step into the elevator without anyone cutting the rope in public. They want adoption without the spectacle. They want the Haughwout Building without the Crystal Palace.

And you can get there, for a while, by mandating use, tracking logins, and calling it transformation. But if you never close the gap, you end up with a building full of elevators nobody wants to ride.

Think about what the elevator actually meant for the people running freight hoists. Not a new machine, a verdict. The thing you knew how to do, the thing that made you valuable, the thing you’d built a working life around: suddenly the building didn’t need it the same way.

That’s not a technology problem. That’s an identity and relevance problem.

And that’s the fear underneath almost every AI conversation happening in organizations right now. Not “will this work?” The technology works. The real question is quieter and harder:

What does it mean about me if it does?

If the system can do what I do, faster and cheaper, what am I? What’s left?

You can’t answer that with a training course. You can’t answer it with a roadmap. You answer it by redesigning work in a way that makes the human more necessary, not less, and by saying that out loud, directly, before your people have to wonder.

That takes belief. Not in the technology but in the people.

We’re in the Same Elevator

AI capability is not the constraint. Say that plainly, because a lot of organizations are still acting like it is.

The models work. The outputs are real. The productivity gains are documented. The technology has already had its Crystal Palace moment. The rope has been cut, the platform didn’t fall, and someone is standing there saying all safe.

Most organizations are still standing in the crowd.

Not because their people are irrational. Because trust doesn’t transfer on a spec sheet. Because the gap between capability and trust is a human problem, not a technical one. Because most leaders are waiting for confidence to show up before they invest in building it.

That’s not how trust works.

Trust has to be designed. You don’t get it by announcing a strategy and waiting for people to come around. You get it by manufacturing the moment, by creating the witnessed proof, by building the scaffolding and by putting the operator in the elevator, even when the technology doesn’t need one anymore.

Your people aren’t afraid of the technology. They’re afraid of what it means for them. They need to watch the worst-case scenario play out safely, in their own context, with people they trust. They need the operator in the cab. They need the short floor first.

That’s not resistance. That’s human. And designing around it is everyone’s job, not just IT’s.

All Safe

Otis didn’t change how elevators worked. He changed how people felt about getting in one.

That’s the whole job right now. Not the technology, the trust. Not the rollout but the redesign of confidence, at every level of your organization, with the patience and intention it actually requires.

Be the person who cuts the rope in public. Not recklessly but thoughtfully. Safety brake tested and ready with people watching. Full transparency about what you’re doing and why.

The elevator works. Someone has to be first to step in.

Now to Next.

P.S. — If this resonated, the best next step is Now of Work — a weekly gathering of practitioners actively doing this work. Not a webinar. Not a newsletter. A real conversation, every week, with people trying to close the same gap you are. Join us at https://now-of-work-e1a0b5.circle.so/c/now-of-work-weekly-digital-community-call/

P.P.S. — If your leadership team is wrestling with the gap between AI capability and trust and you want to bring this framework into your organization, this is exactly what I work on. Reach out at jason@nowtonext.ai

About Jason

Jason Averbook is the co-founder of Now to Next, an adjunct professor of business, and a globally recognized thought leader, advisor, and keynote speaker working at the intersection of AI, human potential, and the future of work. He spent the last few years as Senior Partner and Global Leader of Digital HR Strategy at Mercer, helping the world’s largest organizations reimagine how work gets done, not by implementing technology but by transforming the mindsets, skillsets, and cultures that have to come first.

Over the last two decades, Jason has advised hundreds of Fortune 1000 companies and their leaders, founded Knowledge Infusion and Leapgen, authored two books on the evolution of HR and workforce technology, and become a world-renowned keynote speaker who has delivered hundreds of talks on the future of work. His work challenges leaders to stop treating digital transformation as an IT project and start treating it as a human strategy.

Through his Substack, Now to Next, Jason shares honest, provocative, and practical insights on what’s actually changing in the workplace, from generative AI to skills-based organizations to emotional fluency in leadership. His mission is simple: to help people and organizations move from noise to clarity, from fear to possibility, and from now to next.

You can reach him at jason@nowtonext.ai or connect on LinkedIn.

Elevator-analogy is one of the best ones for tech! And the human operator was present for a looooong time.

That's a brilliant way to look at it. Pushing it a little further, with AI there are still times when the rope breaks and the elevator falls at this point. I'm not sure we've gotten to the place with AI where the entire thing is fall-proof. Or maybe it's just that the elevator installation isn't fall-proof: the way we use AI, or create our solutions. But even now in the 2020s, if an elevator fails or falls (which doesn't really happen much), we would jump on maintenance or poor building design to blame, not the creator of the elevator. And who knew those elevator operators were just the department store culture wrapped up in a uniform. I'll be mulling this over in my brain - thanks for the article!