The Chatbot Was the Warmup

What agents are about to do to work makes the last two years look like a pilot program.

Picture this. Your company just deployed Microsoft Copilot. People are using ChatGPT. Maybe you built your own LLM. Maybe you have a Gemini license sitting in your Google Workspace. Leadership calls it an AI strategy. Employees are typing questions into chat windows and getting answers faster than they used to. Productivity is up. The slide deck looks great.

And you are nowhere near ready for what comes next.

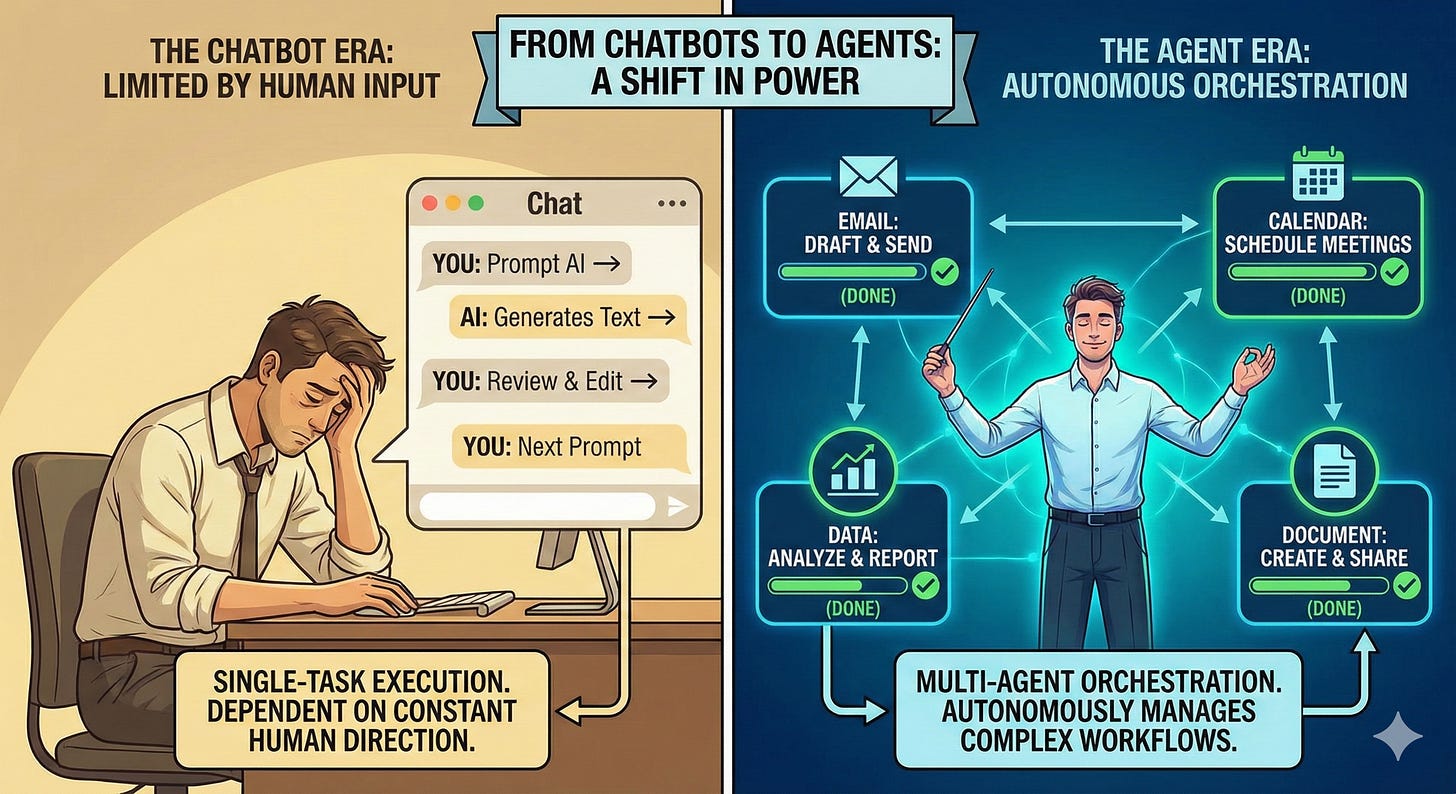

What you’ve deployed are chatbots. Brilliant, useful, genuinely impressive chatbots, but chatbots nonetheless. Tools that respond when spoken to and stop the moment you stop asking. The intelligence is real. The initiative isn’t. Every next step still requires a human to decide what it is. One person. One conversation. One task at a time.

Now contrast that with how I work today. At any given moment I have fifteen to twenty agents running simultaneously, each one handling a different thread of work. One is researching. One is drafting. One is monitoring. One is analyzing. One is following up on things I would have forgotten. I am not doing less work. I am doing more consequential work, because the execution layer has been delegated to agents that don’t sleep, don’t lose focus, and don’t need a meeting to get started.

That is not a more advanced version of using ChatGPT. That is a different relationship with work entirely.

The problem isn’t that people are using these tools wrong. The problem is that when you only ever use AI as a chatbot, you develop a chatbot-sized picture of what AI can do. It’s like being handed a light bulb and thinking electricity is about getting a little more light in the room. The light bulb is real, the improvement is real, and you’d miss it if it were gone. But you have no frame of reference for the electrical grid. For what happens when the whole system gets rewired. For how that same underlying power eventually runs your hospital, your factory, your city. People who are only experiencing AI as a chat window are not building the mental model they need to see what agents make possible.

You cannot prepare for something you cannot yet imagine.

What’s coming is something categorically different. And if you think the chatbot era changed things, you haven’t seen anything yet.

A Chatbot Talks. An Agent Works.

Here’s the simplest way to understand the difference.

A chatbot is a conversation. You ask it to draft an email, it drafts the email. You ask it to summarize a document, it summarizes the document. Then it waits. It has no idea what you need next, no ability to take the next step on its own, and no awareness that there even is a next step. You are still the project manager of your own work, using AI as a very fast assistant. One conversation. One output. Back to you.

An agent is a colleague you can delegate to. You give it a goal, and it builds the plan, executes the steps, monitors what’s working, adjusts when something doesn’t, and keeps going until the job is done. You don’t manage it turn by turn. You hand it an outcome and check back in. And critically, while that agent is working, you can hand nineteen other outcomes to nineteen other agents. All of them running. All of them working. You sitting at the center, not as the executor but as the orchestrator.

Here’s what that looks like in an ordinary week. You’re a marketing manager and you need a competitive analysis done, six social posts drafted, a vendor contract reviewed for red flags, and meeting notes from last week turned into action items. With a chatbot you do each of those things one at a time: prompting, waiting, reviewing, moving to the next. With agents you define all four outcomes, set them running simultaneously, and spend your morning on the thing that actually needs your brain: the strategy conversation with your client that no agent can have for you. You come back to four completed workstreams and spend your judgment on what matters, not your time on what doesn’t.

Or think about hiring. With ChatGPT or Copilot you might use AI to write job descriptions, then go post them yourself, screen resumes yourself, schedule interviews yourself, with AI helping you draft an occasional email along the way. The AI is useful. You are still doing the work. With an agent you define what a great hire looks like, set the guardrails, and the agent posts the roles, screens the applicants, schedules the interviews, sends the follow-ups, and surfaces the top candidates for your review. The human steps back from execution and steps into judgment.

That is not a subtle difference. That is a fundamental restructuring of how work happens, what a productive person looks like, and what organizations need to be built around.

Think of it this way. A chatbot is a brilliant colleague who only speaks when spoken to and stops working the moment you leave the room. An agent is a colleague you can hand a project to on Monday and trust that something meaningful will be done by Friday, without you managing every step in between. Now multiply that by twenty. That is the new unit of work, and most organizations have no framework for it yet.

A chatbot asks you what to do next. An agent decides what to do next.

This isn’t the same AI you’ve been playing with.

This Is Already Happening

This is not a prediction about 2028. Agents are being deployed right now, across industries, at scale.

Salesforce Agentforce is handling customer service workflows end to end, resolving cases without a human ever getting involved. ServiceNow agents are managing IT incidents from detection through resolution. Workday is rolling out agents that handle employee lifecycle events autonomously. Tools like Claude Cowork let you hand off complex multi-step tasks across your files and systems and come back to completed work. OpenClaw enables businesses to build custom agents that operate continuously in the background, handling processes that used to require dedicated headcount.

These aren’t beta experiments happening in tech companies. They’re in production, in organizations that look a lot like yours, doing work that humans were doing twelve months ago.

And here is the part that should stop you cold. The people running these agents aren’t working harder than everyone else. They’re working at a different level of leverage entirely. One skilled agent-orchestrator can produce what previously required a team, not because they’re smarter or more experienced, but because they’ve learned to multiply themselves through agents in a way most of their peers haven’t started thinking about yet.

The companies deploying these tools are not asking “how do we make our people more efficient?” They’re asking “what do we actually need our people doing once agents handle the rest?” That is a different question. It leads to different decisions. And the organizations asking it are pulling ahead in ways that will be very hard to close later.

Why Most Organizations Are Getting This Wrong

Most companies talk about AI strategy today and mean chatbot strategy. A Copilot license here. A ChatGPT Enterprise account there. A policy about what employees can and can’t paste into a chat window. That’s not a strategy. That’s procurement dressed up as transformation.

The mistake is treating chatbots and agents as points on the same continuum, as if agents are just more advanced chatbots you graduate to eventually. They’re not. They represent a different level of AI autonomy, built on different design principles, requiring different organizational capabilities to deploy well.

Most organizations are not preparing for that. They’re running Copilot training sessions and calling it AI readiness. They’re measuring how many employees have activated their AI tools and calling it adoption. They’re adding AI to existing processes and calling it transformation.

What they’re actually doing is automating dysfunction.

Taking broken processes, inefficient workflows, and outdated organizational structures and running them faster with AI. The output improves slightly. The underlying problem doesn’t move.

You can’t layer frosting on a moldy cake and call it a bakery.

At some point you have to deal with what’s underneath. The organizations that will win the agent era are not the ones with the most licenses. They’re the ones that did the harder work first: fixing the process, clarifying the outcome, and building the human judgment that knows when to trust the machine and when to take the wheel back.

Technology amplifies whatever already exists, the good and the broken. Deploy agents into a dysfunctional organization and you don’t get transformation. You get faster dysfunction at greater scale.

What This Means for You

Here’s the part most AI content skips, because it’s uncomfortable. What does this mean for regular people? Not executives. Not technology leaders. People with jobs they’re trying to keep and careers they’re trying to build.

The honest answer is that agents will take over a significant portion of what knowledge workers do today. Not all of it. Not the parts that require genuine human judgment, relationship, creativity, and contextual wisdom. But the execution layer, the tasks that are well-defined, repeatable, and process-driven, that’s where agents are going to land first and land hard.

Here’s the belief gap that concerns me most. When people only experience AI as a chatbot, they genuinely cannot see how it threatens or transforms their role. The chatbot helps them do their job a little better, so they conclude AI is a tool, not a transition. They’re not wrong about the tool. They’re wrong about the transition.

The light bulb didn’t tell you the electrical grid was coming. And the electrical grid didn’t ask permission before it changed everything.

When one person can orchestrate fifteen to twenty agents simultaneously, the math of employment changes in ways we are not prepared to discuss honestly. What happens to the team of twelve when one person with strong agent skills can do what they used to do collectively? What happens to entry-level roles that existed precisely because someone needed to do the execution work that agents now handle? What happens to the people who never get the chance to develop agent-orchestration skills because their organization didn’t invest in bringing them along?

Job displacement from AI is not a future risk. It is a present reality unfolding right now in white-collar work, in professional services, in creative fields, in roles that people were told were safe because they required human intelligence. The question society has to answer, and has not yet seriously engaged with, is what comes next for the people displaced, how we build new on-ramps into meaningful contribution, and who bears responsibility for that transition. Organizations cannot outsource that question to governments. Governments cannot outsource it to organizations. And neither can pretend the timeline is long enough to wait.

The people who will thrive in an agentic world are not necessarily the most technical. They’re the ones who can define outcomes clearly, who can evaluate AI work rather than just consume it, who can think in systems rather than tasks, and who can exercise judgment in the moments agents hand work back to humans. Those are learnable skills. But they don’t develop from a training module. They develop from practice, from experimentation, and from organizations that build the psychological safety to try things that might not work the first time.

Changefulness is the organizational muscle for living inside continuous change: treating uncertainty as the operating condition rather than the exception.

The agent era is not a change to manage. It’s a condition to build for, starting now, for every person in your organization regardless of role or level.

The New Unit of Work Isn’t a Person. It’s a Person Plus Their Agents.

This is the part of the conversation most organizations haven’t started yet, and it’s the part that will define the next decade of work.

When I have fifteen to twenty agents running simultaneously, I am not a more productive version of a knowledge worker. I am a different kind of worker entirely. My contribution is no longer measured by what I execute. It’s measured by what I orchestrate, what outcomes I define, what judgment I bring to the moments agents hand back to me, and how effectively I build and deploy the network working on my behalf.

Making heads count used to mean getting the most out of your people. Now it means understanding that each person comes with a multiplier, and your job as a leader is to help them develop it.

That changes performance management in ways most organizations haven’t grappled with honestly. What does it mean to evaluate someone’s contribution when their output was generated by agents they orchestrated rather than work they executed themselves? How do you measure performance when one person running twenty agents produces ten times the output of a colleague doing nominally the same job manually? How do you build fair compensation structures around leverage ratios that vary enormously across your workforce? How do you define a team when the most productive member might have twenty agents running while their colleague is still working largely by hand?

These are not hypothetical future problems. They are landing on desks right now, without frameworks, without precedent, and without the language to even describe what’s happening.

And beyond the organization, what does this mean for society? Education systems built around training people to execute tasks are preparing students for a layer of work that agents are absorbing. Career ladders built around years of execution experience before reaching judgment-level roles are becoming obsolete faster than institutions can redesign them. Social safety nets built around the assumption of stable employment in clearly defined roles are not designed for a world where the nature of contribution is changing faster than policy can track.

These are not problems technology will solve. They are human problems requiring human choices, made by leaders, policymakers, and organizations willing to look honestly at what’s actually happening rather than what’s comfortable to acknowledge.

The Readiness Gap Nobody Is Talking About

We spent the last two years teaching people how to prompt. How to talk to chatbots. How to phrase questions to get better answers out of a tool that still depends entirely on human initiative to function. That was useful, and prompting still matters, but it prepared people for a world that’s already changing underneath them.

Working with agents requires a fundamentally different mindset. You don’t prompt an agent the way you prompt a chatbot. You define outcomes. You set guardrails. You think about what done looks like before the agent starts, because you won’t be there to redirect it every five minutes. You develop judgment about when to trust an agent’s output and when to interrogate it. You learn to design the handoff between human and machine, which is where most of the value and most of the risk actually live.

Stop calling it AI assistance. Start calling it done.

Then ask yourself honestly whether your organization knows how to hand work off at that level, measure it fairly, and bring every person along rather than leaving the slowest ones behind.

This is the Mindset-Heartset-Skillset-Toolset problem in its purest form. Most organizations jumped straight to Toolset. They bought the AI tools, deployed them, and hoped the mindset would follow. It doesn’t work that way. It has never worked that way. The transformation order has always been the same: Mindset first, then People, then Process, then Technology. The organizations that skip the first three and go straight to deploying agents will find out quickly that the technology amplifies whatever already exists, including every unresolved question about fairness, equity, and human development they chose not to address first.

Best practices from the chatbot era are someone else’s answers from a different moment. The agent era needs first principles thinking.

Where to Go From Here

If you lead an organization, the most important thing you can do right now is stop treating chatbots and agents as the same conversation. Brief your leadership team on the actual distinction. The language you use internally shapes the decisions you make, and most leadership teams are still talking about AI in ways that guarantee they underinvest in what actually matters. The chatbot era needed better prompters. The agent era needs better orchestrators, and building that capability requires a completely different investment than another round of AI literacy training.

Start mapping your workflows for agent-readiness before you have agents, because the organizations that think through the human-to-agent handoffs before deployment will implement in months what the unprepared will spend years unraveling. And when you deploy, rebuild your performance frameworks to match the reality of how work actually happens now. Measuring activity in an agentic world is like measuring how fast someone types. It captures the wrong thing entirely.

Most importantly, take the societal question seriously rather than leaving it to someone else. If your organization is deploying agents that displace work, you have a responsibility to think honestly about what happens to the people on the other side of that displacement, what new roles become possible, and how you help your workforce develop the skills to occupy them. This is not charity. It is the condition for building an organization that can sustain trust, attract talent, and operate with legitimacy in a world that is watching very closely how companies handle this transition.

If you’re an individual trying to figure out where you stand in all of this, start experimenting today rather than waiting for your organization to catch up. Use Claude Cowork to hand off a complex project and see what comes back. Explore OpenClaw and build a simple agent workflow with tools already available to you. Get comfortable with the experience of defining an outcome rather than managing a task. The intuition you build now will be worth more than any certification you could earn, because what the agent era rewards above everything else is judgment, and judgment only comes from doing.

The Close

The chatbot era gave us a glimpse of what AI could do when we learned to ask better questions. The agent era is going to show us what AI can do when we learn to trust it with the work itself, and what we become when the execution layer is no longer ours to carry.

That is an extraordinary opportunity and a profound responsibility at the same time. The opportunity is leverage that previous generations of workers could not have imagined. The responsibility is making sure that leverage lifts more people than it displaces, that the electrical grid gets built in a way that powers everyone’s house and not just the ones who already knew it was coming.

The light bulb was a miracle. The electrical grid changed civilization.

Most organizations are still marveling at the light bulb.

The grid is already being built. The question is whether you’re helping design it, or whether you’re going to look up one day and realize the power has been on for years and you’ve been sitting in the dark.

You haven’t seen anything yet.

The question is whether you’ll be ready when you do, and whether you’re bringing everyone with you when you get there.

Jason Averbook is a globally recognized thought leader, advisor, and keynote speaker focused on the intersection of AI, human potential, and the future of work. He is the Senior Partner and Global Leader of Digital HR Strategy at Mercer, where he helps the world’s largest organizations reimagine how work gets done — not by implementing technology, but by transforming mindsets, skillsets, and cultures to be truly digital.

Over the last two decades, Jason has advised hundreds of Fortune 1000 companies, co-founded and led Leapgen, authored two books on the evolution of HR and workforce technology, and built a reputation as one of the most forward-thinking voices in the industry. His work challenges leaders to stop seeing digital transformation as an IT project and start embracing it as a human strategy.

Through his Substack, Now to Next, Jason shares honest, provocative, and practical insights on what’s changing in the workplace — from generative AI to skills-based orgs to emotional fluency in leadership. His mission is simple: to help people and organizations move from noise to clarity, from fear to possibility, and from now… to next.

You can email Jason at jasonaverbook@gmail.com or send him a message on LinkedIn to connect.